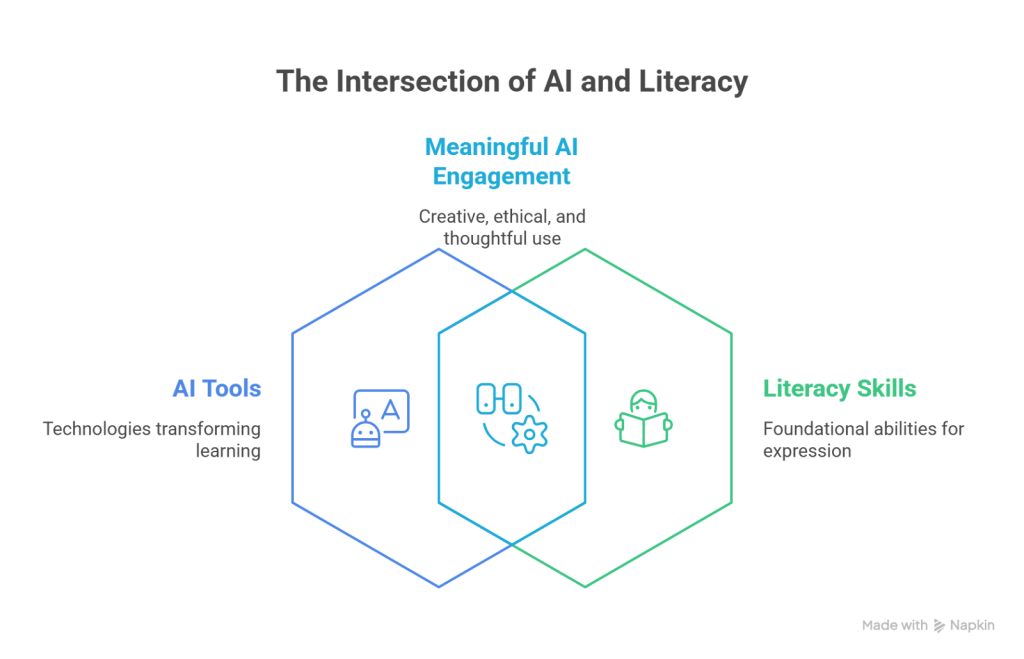

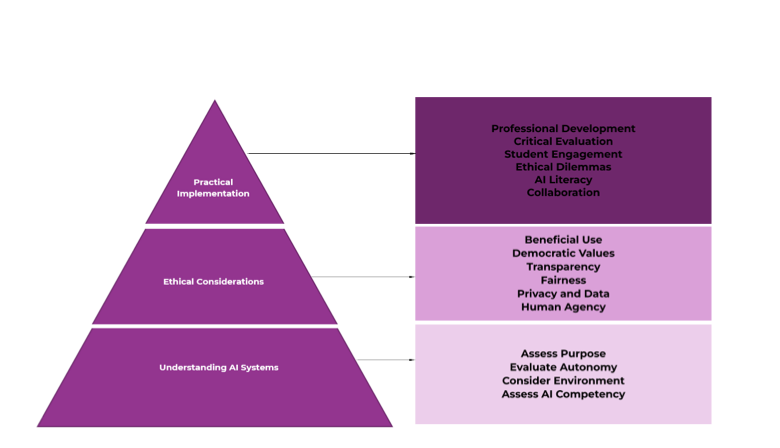

In a previous article, titled “AI vision in Higher Education: Toward a critical AI literacy at the University of Groningen”, we discussed the importance of fostering critical AI literacy among both higher education (HE) instructors and students. We also described the process carried out at the Faculty of Science and Engineering (FSE), where a group of 33 participants (including instructors, students, and other faculty members) collaborated to develop what we call the “AI literacy vision document.” This document outlines our understanding of critical AI literacy as the set of competencies needed to evaluate, communicate with, and work alongside AI technologies. In addition, it offers practical guidelines for designing courses and programmes that aim to cultivate these competencies.

In that same article, we stressed the need to go beyond definitions and strategic vision. If we are to promote critical AI literacy in meaningful ways, we must also create concrete training opportunities. This is precisely the goal we embraced at the Centre for Learning and Teaching (CLT) after completing the AI literacy vision document. It is also directly aligned with the aim of the EU-funded INFINITE project, particularly within Work Package 4: to support HE instructors in developing AI literacy skills through capacity-building courses.

In this article, we present one of the courses developed as part of this project, which has already been implemented at our faculty. Titled “Exploring limits and possibilities in course design with AI”, this course marked an important step toward equipping instructors with the tools and mindset necessary to engage with AI in thoughtful, informed, and critical ways. Rooted in socioconstructivist learning theories and drawing inspiration from challenge-based and inquiry-based pedagogies, the course was designed not as technical training but as an open space for reflection and dialogue. Its main goal was to invite instructors to explore both the potential and, perhaps more importantly, the limitations of generative AI tools when used in the context of lesson plan design.

To support this objective, we structured the course into three main sections, each focusing on a specific sub-goal and building progressively on the previous one:

• Section one – Lesson plan design: Art or engineering process? This opening section invites participants to reflect on how they currently design lesson plans in their own teaching practice and what kinds of knowledge this process requires. The underlying idea is that, before using AI tools for lesson planning, it is essential to understand how we design without them, what scientific research says about effective instructional design, and what professional knowledge educators need to design lessons.

• Section two – AI in lesson plan design: Myth or reality? Building on the first section, this part of the course shifts the focus to generative AI. It encourages participants to consider how they interact with these tools and, more importantly, how to critically evaluate the quality, relevance, and educational value of the AI-generated content.

• Section three – What does the literature say about AI-generated lesson plans? The final section explores the risks and limitations involved in using AI for educational design. This discussion is informed by recent academic research, which helps participants move beyond personal impressions and engage with evidence-based perspectives.

Next, we take a closer look at how each part of the course was implemented in practice.

The first section of the course consists of three tasks, each designed to prompt reflection on personal teaching practice and lay the groundwork for a deeper understanding of what lesson design entails.

In Task 1, participants are asked to individually design a short lesson plan consisting of 5–7 activities. They are free to choose the topic and target group, based on their own expertise and teaching context. Importantly, they are instructed not to use any digital tools or AI support for this task. Instead, they rely solely on their professional experience, intuition, and creativity.

Task 2 is also completed individually and focuses on meta-reflection. Participants are asked to think carefully about the process they followed while designing their lesson plan. Specifically, they describe the steps they believe they took (e.g., “First I thought about the topic, then the learning objectives, then the activities…”) and begin to surface the implicit logic behind their design choices.

Task 3 brings participants together in small groups (3–4 members) to share and discuss their lesson plans and the design processes they followed. Based on these exchanges, each group collaboratively develops what they consider an “ideal” model for lesson plan design: a systematic approach that outlines clear steps and their intended order.

The rationale behind these tasks mirrors the evolution of the instructional design field itself. Initially, lesson planning was viewed as an intuitive and artistic process, grounded in individual creativity and experience. Over time, however, the field began to adopt a more systematic, evidence-based approach, similar to engineering design. Today, instructional design is increasingly recognised as having a dual nature: it is an art that requires creativity and intuition, but it is also considered an engineering process that uses knowledge from educational science research in a systematic way.

To close this section, the course instructor facilitates a dialogic discussion in which participants are introduced to recognised models of instructional design, grounded in educational research. These models highlight the elements and types of knowledge necessary for designing effective lessons. By the end of this section, participants have developed a clearer, shared understanding of the foundations of instructional design, knowledge that is essential for critically engaging with AI-generated lesson plans in the next stages of the course.

The second section of the course focuses on using generative AI tools in practice, while also encouraging participants to think critically about them. It consists of two tasks designed to help participants learn how to interact with AI systems and, more importantly, to strengthen their ability to critically evaluate the outputs these tools generate.

In Task 4, participants return to the lesson plan they designed in section one. This time, they are asked to try to “improve” it, keeping in mind that what counts as an improvement can vary depending on the context and personal judgment. To do so, they select a Large Language Model (LLM) of their choice and follow three steps: (1) formulate a prompt they believe will help improve their lesson plan, (2) enter the prompt into the LLM, and (3) critically analyse the AI-generated output. For this task, participants are provided with a scaffolding table to help organise their analysis, but no predefined evaluation criteria are shared. Instead, they are encouraged to rely on their own professional judgment to assess the strengths and weaknesses of the output.

After completing the task, we hold a group discussion in which participants share their reflections. Together, we talk about the possible benefits and limitations of using generative AI in lesson design. We also try to identify the criteria, often implicit, that they used to judge the AI-generated content. This discussion helps participants better understand their own teaching values and how they make decisions when designing lessons.

Task 5 builds on this experience with a more guided approach. Participants are introduced to commonly used frameworks for prompt design, many of which are recommended by AI companies. They are then asked to repeat the process: generating a new prompt, submitting it to the AI system, and critically analysing the output. This time, however, they are provided with two supports: the same scaffolding table from Task 4, and an additional document containing guiding questions for analysis. These questions are directly connected to the instructional design elements introduced in section one. By this stage, participants are expected not only to use AI tools more strategically, but also to evaluate their use in a more systematic and pedagogically grounded manner.

To conclude, the third section introduces an ethical dilemma associated with the use of generative AI tools: bias. This section follows the analysis of AI-generated outputs carried out in the previous tasks, where participants discussed both the strengths and limitations of these tools. Building on that discussion, the aim is now to raise awareness of how biases are embedded in AI systems and why this matters for education. We begin with a brief explanation of how Large Language Models work, placing special emphasis on how biases arise during their development and training. We then connect these issues to the educational context by introducing the concept of pedagogical bias: the way in which AI-generated content can reflect and reproduce specific pedagogical assumptions, values, or perspectives.

To deepen this reflection, we present a selection of recent research studies that explore the presence of pedagogical biases in AI tools. These examples help participants recognise that the use of AI in education is not neutral and that these technologies also come with significant limitations.

As mentioned earlier, this capacity-building course is one step towards translating the idea of critical AI literacy into practice, specifically in the context of lesson plan design. It is worth noting that this is just one of several efforts we are currently undertaking. Within the CLT, we are also developing courses focused on the ethical dilemmas surrounding AI systems, academic integrity, and assessment. We encourage readers to explore these initiatives, adapt them to their own institutional settings, and reflect on what it means to engage with AI in responsible, ethical, informed, and pedagogically meaningful ways.