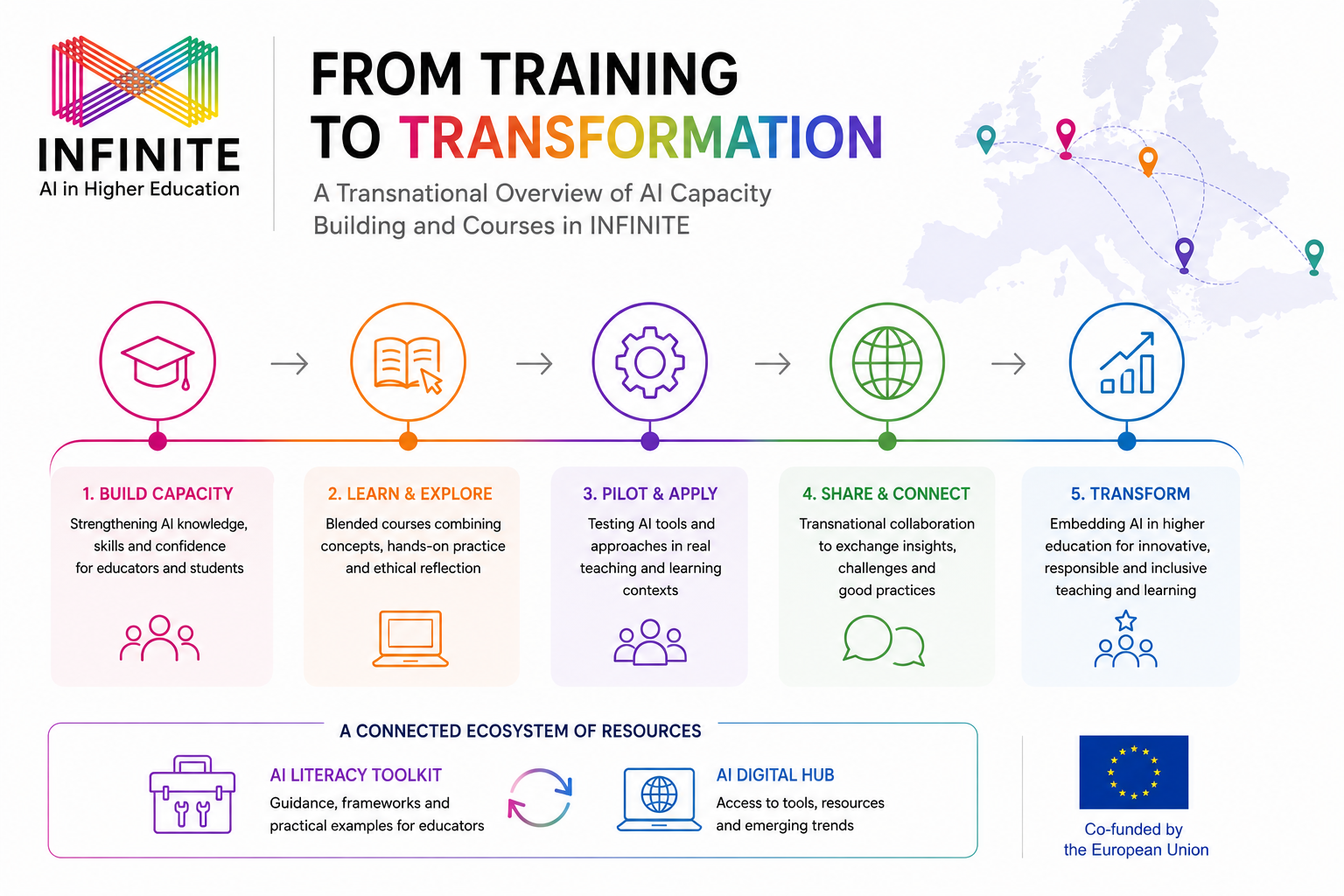

The rapid development of Artificial Intelligence (AI) has created both excitement and apprehension across global academic landscapes. This web article explores the transition from technical experimentation to digital fluency, drawing insights from the blended courses and real-classroom implementations of AI scenarios that were recently developed as part of Work Package 4 of the INFINITE (https://infinite-erasmus.eu/) Erasmus+ project. By moving beyond the initial “hype”, institutions can develop deep, sustainable AI literacy among both faculty and students.

The Power of Scenario-Based Learning

One of the most effective ways to integrate AI into Higher Education (HE) is through scenario-based pedagogy. This approach is grounded in the pedagogical tradition that emphasises constructivism and experiential learning, as theorised by authors such as Jean Piaget and Lev Vygotsky (Della Volpe, 2024).

According to Piaget, learning is an active process occurring through the interaction between the individual and their environment. Similarly, Vygotsky highlights the importance of social context, arguing that knowledge is acquired through dialogue and collaboration. Scenario-based learning applies these principles by creating environments that require active participation, placing students in complex situations where they must solve problems and make decisions (Della Volpe, 2024).

Hence, rather than teaching AI as a standalone technical subject, embedding it within these real-world academic or professional challenges allows learners to:

- Move beyond technical experimentation: In line with Piaget’s “active process”, participants transition from simply “playing” with tools to making reflective, informed decisions based on specific goals.

- Contextualise AI use: By applying AI to specific tasks, such as creating digital presentations or conducting research, the technology becomes a relevant partner in the learning process, facilitating the “interaction with the environment”.

- Reduce barriers to adoption: Using “discipline-neutral” scenarios that are easily adaptable helps faculty quickly customise AI activities for their specific subjects. This enhances the collaborative environment Vygotsky advocated, without requiring a deep computer science or IT background.

The Ethics-First Approach

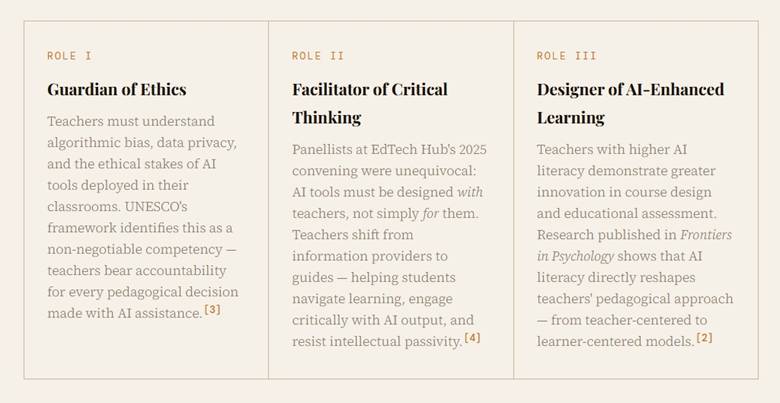

A critical pillar of developing long-term AI readiness is the systematic embedding of ethical reflection. Rather than being treated as a separate, theoretical topic, ethics should be integrated into AI-related activities. This includes:

- Critical Evaluation: Developing the ability to assess AI-generated outputs for accuracy, reliability, and potential algorithmic bias.

- Academic Integrity: Establishing clear guidance to help students understand the boundaries between “AI-assisted” work and “AI-generated” work.

- Responsible Data Practices: Promoting an awareness of data privacy and intellectual property.

Building Long-Term Capacity

Evidence from the INFINITE project implementations suggests that a blended approach—combining self-paced online learning with face-to-face collaborative application—creates the strongest foundation for AI readiness. While asynchronous courses provide the necessary theoretical framework, in-class sessions offer the pedagogical depth needed for peer discussion and real-time troubleshooting.

To sustain this growth, HE institutions must view AI literacy not as a one-time training session, but as a continuous journey of development. As technology evolves, institutions must ensure that the academic community remains both technically skilled and critically aware.

References

Della Volpe, V. (2024). Scenario-Based Learning: An Inclusive Methodology. Journal of Research & Method in Education, 14(6), 1-5.